Homing Guidance for UAVs Using Monocular Vision-Based SLAM

Abstract

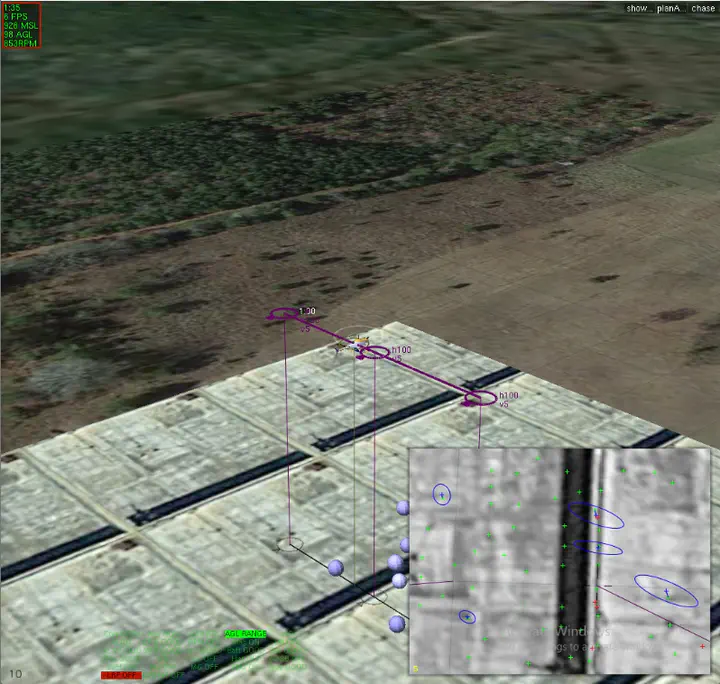

For unmanned aerial vehicles (UAVs) flying in GPS-denied environments, size, weight, and power constraints often make it beneficial to rely on widely available monocular cameras for guidance, navigation, and control. In this work, we explore a monocular vision-based simultaneous localization and mapping (SLAM) framework for performing a “homing” maneuver towards a platform or other rigid body moving at an initially unknown velocity in an unknown environment. The estimation framework relies on a Harris corner detector that extracts distinctive “feature points” from each image, which are used to build a database of “features” in the environment. These feature point measurements are fused with measurements from an onboard inertial measurement unit (IMU) to estimate the ownship state and the velocity of the moving platform. The resulting estimates are then used by the homing mechanism. The vision-based estimation and homing framework has been evaluated in a MATLAB simulation and in a higher-fidelity simulation with realistic physics, sensors, and synthetic imagery.