Abstract

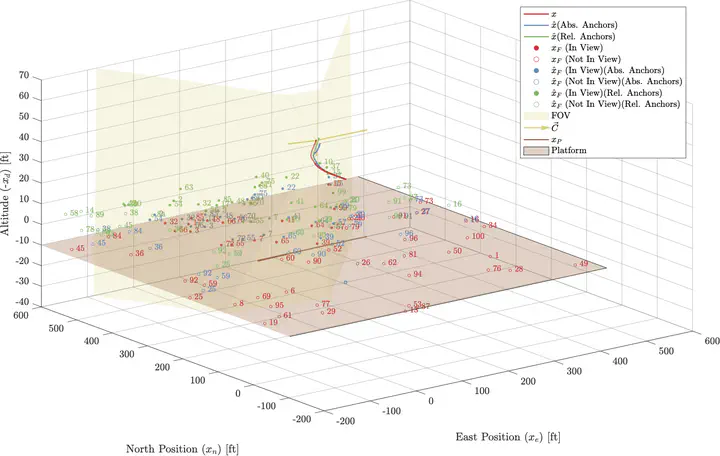

Unmanned aerial vehicles (UAVs) flying in GPS-denied environments often use widely available monocular cameras to aid guidance, navigation, and control. This work presents a monocular vision-based simultaneous localization and mapping (SLAM) framework that allows a UAV flying in a GPS-denied environment to estimate the position and velocity of a platform or other rigid body whose state is not initially known accurately. The framework uses Harris corners detected in the image stream as measurements, which are fused with data from an onboard inertial measurement unit (IMU) in a Bierman–Thornton extended Kalman filter (BTEKF). The filter relies on a database of “features” in the environment. Previous work, including our own, has stored features in the state vector using the inverse depth parametrization (IDP), with “anchors” that serve as reference points for the features. For moving-platform state estimation, this work proposes a novel scheme for storing anchors: a relative vector, rather than the previously established standard of the absolute camera position at the time the feature is first observed. The efficacy of the SLAM framework is demonstrated in a series of MATLAB simulations.
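To make the role of an anchor concrete, the following Python sketch converts a feature stored in the established absolute-anchor IDP form into a Euclidean point. It assumes the common six-parameter layout [x_a, y_a, z_a, θ, φ, ρ] (anchor position, azimuth, elevation, inverse depth) and one common ray convention; the function names are hypothetical, and the relative-vector anchor scheme proposed in this work is not modeled here.

```python
import numpy as np


def idp_direction(theta: float, phi: float) -> np.ndarray:
    """Unit ray from azimuth theta and elevation phi.

    Assumed convention: z forward, y down, x right, so theta = phi = 0
    points straight along +z.
    """
    return np.array([
        np.cos(phi) * np.sin(theta),
        -np.sin(phi),
        np.cos(phi) * np.cos(theta),
    ])


def idp_to_euclidean(feature: np.ndarray) -> np.ndarray:
    """Convert a hypothetical 6-element IDP feature to a 3-D point.

    feature = [x_a, y_a, z_a, theta, phi, rho], where (x_a, y_a, z_a) is the
    anchor (absolute camera position when the feature was first observed)
    and rho is the inverse of the distance along the ray.
    """
    anchor = np.asarray(feature[:3], dtype=float)
    theta, phi, rho = (float(v) for v in feature[3:6])
    if rho <= 0.0:
        # rho == 0 encodes a point at infinity, which has no finite
        # Euclidean position; negative rho would place it behind the anchor.
        raise ValueError("rho must be positive to obtain a finite point")
    # Point = anchor + (distance along ray) * (unit ray direction).
    return anchor + idp_direction(theta, phi) / rho


if __name__ == "__main__":
    # Anchor at (1, 2, 0), ray along +z, inverse depth 0.5 (distance 2).
    print(idp_to_euclidean(np.array([1.0, 2.0, 0.0, 0.0, 0.0, 0.5])))
```

Because the ray angles and inverse depth are all expressed relative to the anchor, the anchor is what ties each feature to a location in space; changing how the anchor is stored changes what the filter must track for every feature.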